InstructDiffusion is a unifying and generic framework for aligning computer vision tasks with human instructions.

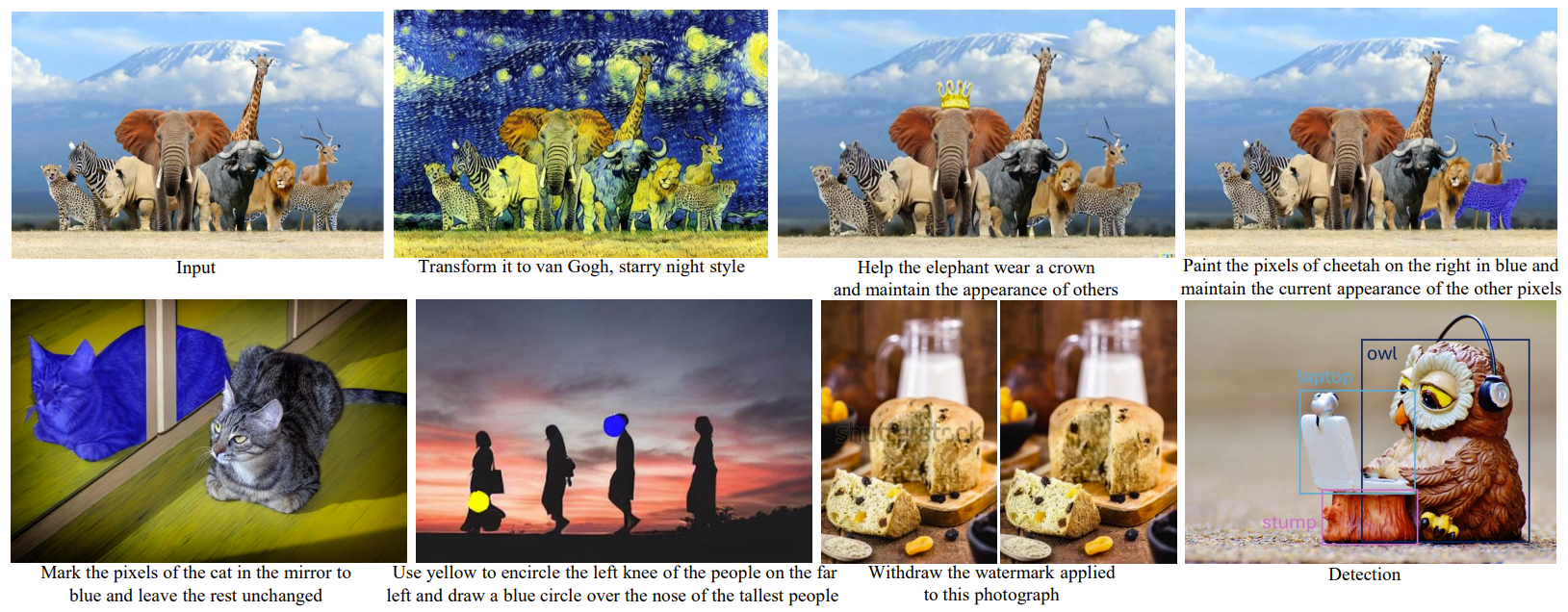

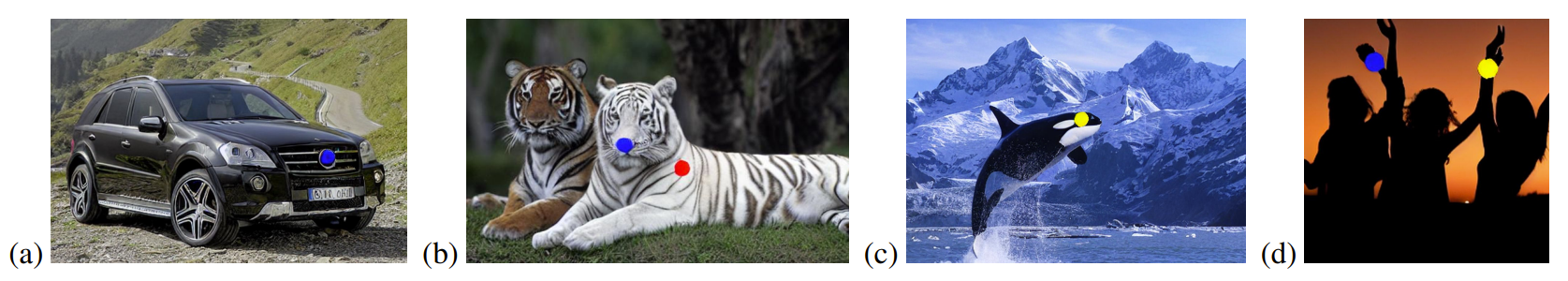

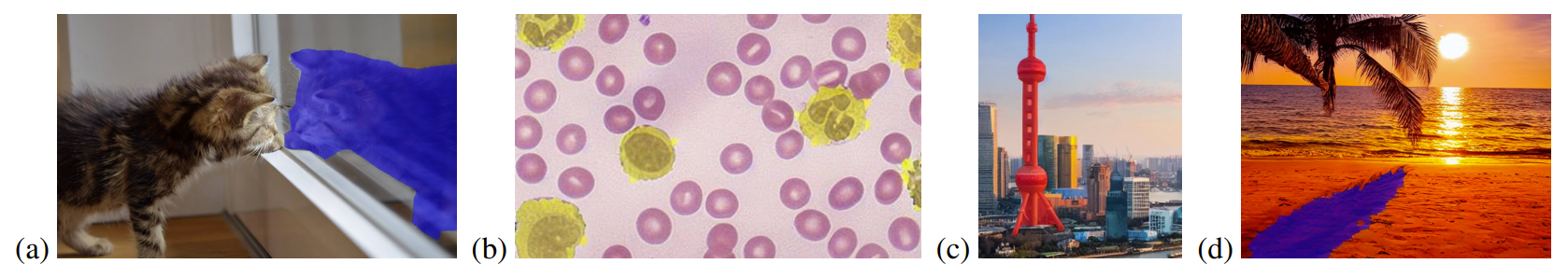

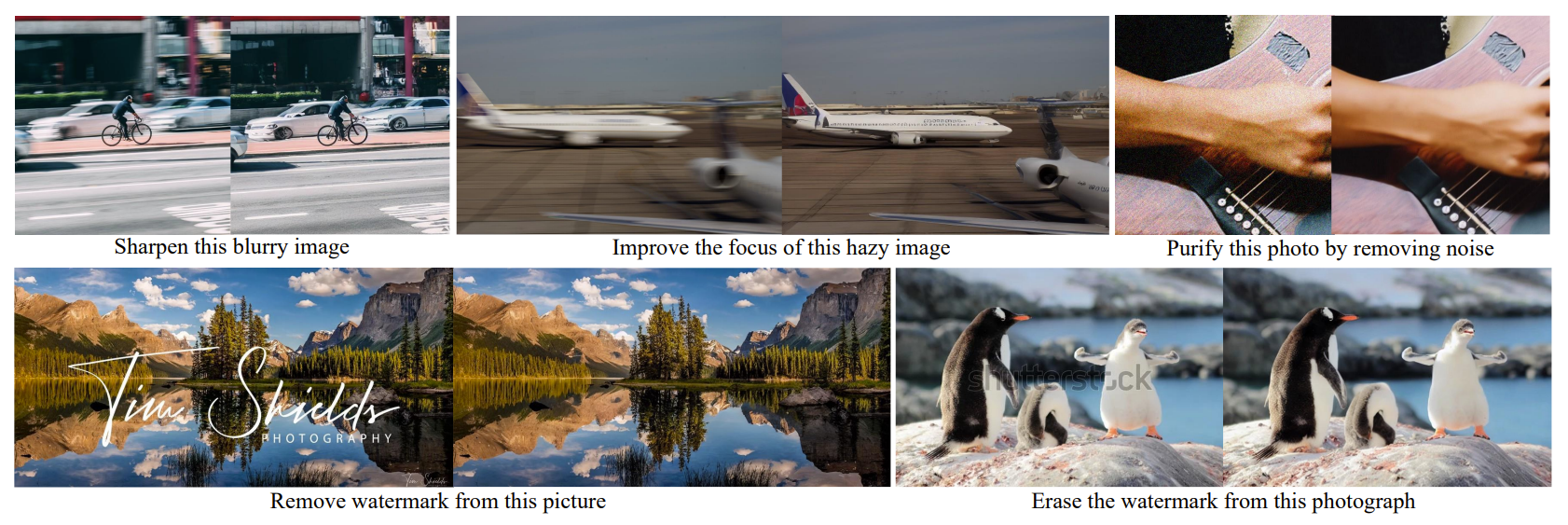

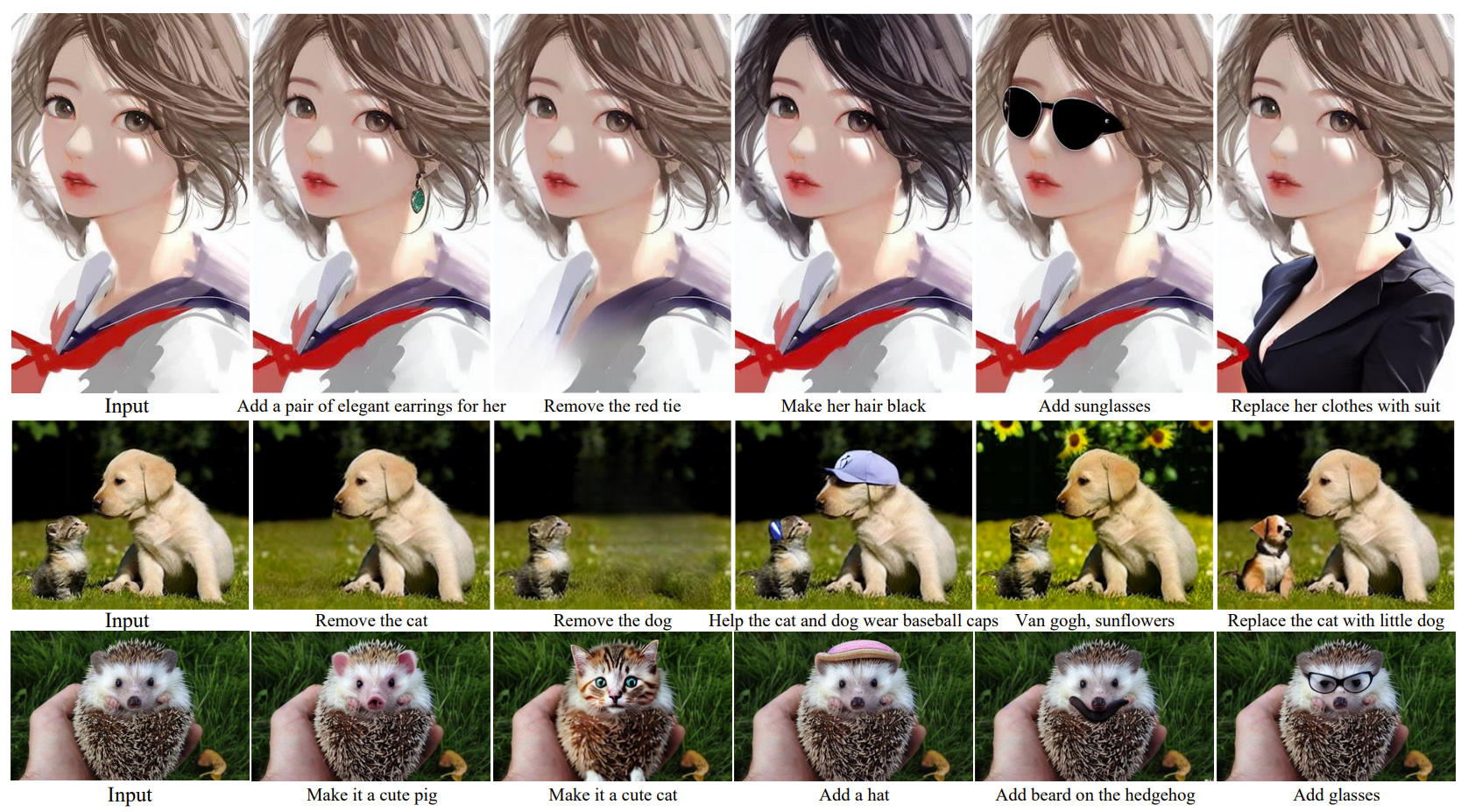

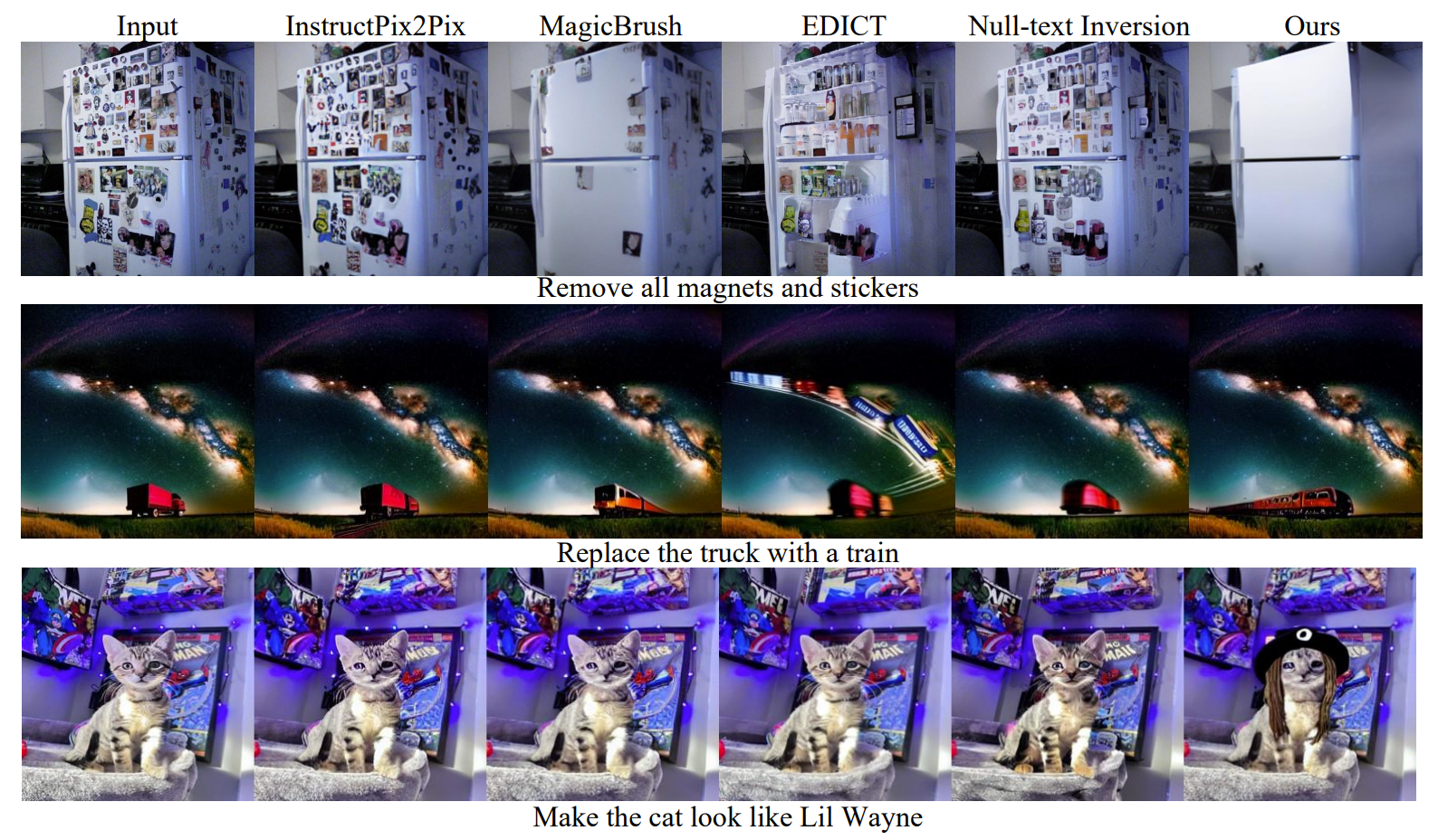

We present InstructDiffusion, a unifying and generic framework for aligning computer vision tasks with human instructions. Unlike existing approaches that integrate prior knowledge and pre-define the output space (e.g., categories and coordinates) for each vision task, we cast diverse vision tasks into a human-intuitive image-manipulation process whose output space is a flexible and interactive pixel space. Concretely, the model is built on the diffusion process and learns to predict pixels according to user instructions (such as circling the man's left shoulder in red or placing a blue mask on the left car). InstructDiffusion can handle a variety of vision tasks, including understanding tasks (segmentation and keypoint detection) and generative tasks (editing and restoration). It even demonstrates the ability to handle unseen tasks and outperforms previous methods on unseen datasets. This represents a significant step toward a generalist modeling interface for vision tasks and toward advancing artificial general intelligence in computer vision.
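The central idea above is to turn each task's output into a target *image*, so that a single instruction-conditioned image editor can cover many tasks. The following minimal sketch shows how such (instruction, input image, target image) triplets might be built for segmentation and keypoint detection. It is an illustration under assumptions made here, not the authors' implementation. The colors (a half-transparent blue overlay for masks, a thin red ring for keypoints), the instruction templates, the list-of-tuples image representation, and all function names (`segmentation_target`, `keypoint_target`, `make_triplet`) are hypothetical, chosen only to mirror the examples in the abstract. The diffusion model that learns to produce these targets is not shown.

```python
from typing import List, Tuple

# Hypothetical representation: an RGB image is a list of rows of (r, g, b) tuples.
Pixel = Tuple[int, int, int]
Image = List[List[Pixel]]

RED: Pixel = (255, 0, 0)
BLUE: Pixel = (0, 0, 255)


def blend(px: Pixel, color: Pixel, alpha: float) -> Pixel:
    """Alpha-blend one pixel toward `color` (alpha=1 replaces it entirely)."""
    r, g, b = (round((1 - alpha) * c + alpha * k) for c, k in zip(px, color))
    return (r, g, b)


def segmentation_target(image: Image, mask: List[List[bool]], alpha: float = 0.5) -> Image:
    """Encode a binary segmentation mask as a semi-transparent blue overlay."""
    return [
        [blend(px, BLUE, alpha) if m else px for px, m in zip(row, mask_row)]
        for row, mask_row in zip(image, mask)
    ]


def keypoint_target(image: Image, center: Tuple[int, int], radius: int) -> Image:
    """Encode a keypoint (x, y) as a red ring of the given radius around it."""
    cx, cy = center
    out = [list(row) for row in image]  # copy so the input image is untouched
    for y, row in enumerate(out):
        for x in range(len(row)):
            dist_sq = (x - cx) ** 2 + (y - cy) ** 2
            # Color pixels whose distance to the center is within 0.5 px of the radius.
            if (radius - 0.5) ** 2 <= dist_sq <= (radius + 0.5) ** 2:
                row[x] = RED
    return out


def make_triplet(task: str, image: Image, label: str, annotation) -> Tuple[str, Image, Image]:
    """Build a hypothetical (instruction, input image, target image) training triplet."""
    if task == "segmentation":
        return f"Place a blue mask on the {label}.", image, segmentation_target(image, annotation)
    if task == "keypoint":
        return f"Circle the {label} with red.", image, keypoint_target(image, annotation, radius=1)
    raise ValueError(f"unsupported task: {task}")


# Usage on a 5x5 uniform gray image.
gray = [[(128, 128, 128)] * 5 for _ in range(5)]
instr, src, tgt = make_triplet("keypoint", gray, "left shoulder of the man", (2, 2))
print(instr)                 # Circle the left shoulder of the man with red.
print(tgt[2][2], tgt[2][3])  # (128, 128, 128) (255, 0, 0)
```

Because every task's answer lives in the same pixel space, the only task-specific part in this sketch is how an annotation is drawn and phrased; a real system would also need a way to decode predicted images back into masks or coordinates for evaluation.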

@article{Geng23instructdiff,
  author  = {Zigang Geng and Binxin Yang and Tiankai Hang and Chen Li and
             Shuyang Gu and Ting Zhang and Jianmin Bao and Zheng Zhang and
             Han Hu and Dong Chen and Baining Guo},
  title   = {InstructDiffusion: {A} Generalist Modeling Interface for Vision Tasks},
  journal = {CoRR},
  volume  = {abs/2309.03895},
  year    = {2023},
  url     = {https://doi.org/10.48550/arXiv.2309.03895},
  doi     = {10.48550/arXiv.2309.03895},
}